Building automation workflows often feels deceptively similar to writing standard application code. We look at logic blocks and data inputs and assume the testing paradigms that served us well in software development will simply transfer over. However, traditional software usually lives within a relatively controlled memory space where state management is internal. Workflow automation is different because it depends largely on external systems behaving as expected. The volatility here is of a distinct kind: we are essentially hoping that a dozen disparate SaaS tools are all functional at the exact moment our trigger fires.

The financial consequences of ignoring this instability are difficult to overstate. Production workflows often fail suddenly, and unplanned downtime is estimated to cost Global 2000 companies $400 billion annually. We need confidence that the entire operation is running smoothly, yet it is often the weakest link that brings the whole system crashing down.

The Testing Pyramid for Workflows

Applying the classic testing pyramid to automation requires a slightly different perspective than standard software development. A commonly cited rule of thumb holds that fixing a defect in production can cost up to 100 times more than fixing it during design. So we prioritize layers based not just on code stability but on data flow reliability. You must treat the pipeline as a living entity where the movement of data carries as much weight as the logic processing it.

Unit tests for individual steps

We start by isolating specific logic blocks. Take a script meant to format a date string. It needs to work on its own terms, so we strip away the internet connection and database to verify it. Testing everything together feels faster initially but usually results in expensive headaches.

- Verify logic in absolute isolation.

- Avoid the high cost of later data cleanup by catching bugs early.

Integration tests for connected systems

Does the CRM actually accept the JSON payload you constructed? Automation relies heavily on gluing systems together, so verifying the connection points is non-negotiable. We confirm that authentication tokens are valid and schemas match. While these tests run slower than unit checks, they catch configuration drift that isolated logic tests miss.

- Confirm the "glue" between platforms holds firm.

- Detect interface changes before they break the pipeline.

- Validate data payloads against real external endpoints.

End-to-end Workflow Tests

At the peak, complex simulations mimic the full lifecycle of a trigger event. These are high-stakes checks. External timing issues make them fragile, so we use them sparingly. You reserve this heavy lifting for critical paths where failure causes significant operational pain.

Balancing Test Coverage and Execution Time

Resource management determines the success of your QA strategy. A test suite that takes hours to run silences itself because we eventually stop waiting for the results. Confidence requires comprehensive coverage, but velocity demands speed.

- Target the highest risks to maximize efficiency.

- Keep feedback loops short to ensure tests actually get run.

- Prune slow or brittle tests that provide low value.

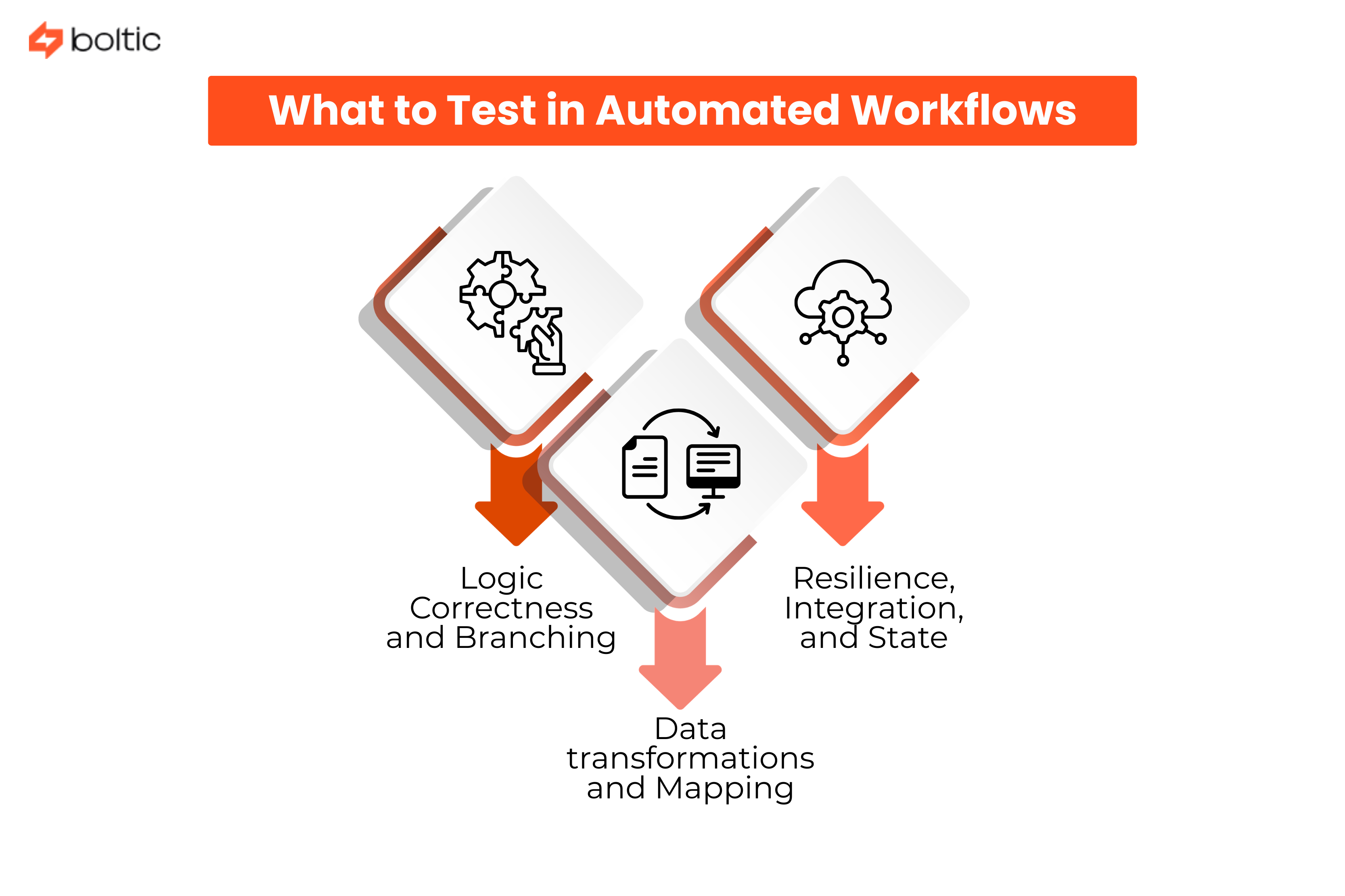

What to Test in Automated Workflows

Identifying the components most liable to fracture dictates your testing strategy. You need to identify where logic splits, where data changes shape, and how the system behaves when the lights flicker.

Logic correctness and Branching

Conditional branching demands immediate scrutiny because variable inputs define the route. Consider a workflow for sales leads: a high score ignites a fast-track sequence, while lower numbers route the lead to a nurturing campaign. We verify that the specific trigger consistently activates the correct path. You must confirm that no logic branch terminates in a dead end.

- Validate execution paths for all distinct triggers.

- Ensure default behaviors exist for unexpected input ranges.
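The lead-scoring example above can be sketched as a small routing function with an explicit default branch. The threshold of 80, the 0-100 score range, and the branch names are assumptions made for illustration; they do not come from any particular platform.

```python
FAST_TRACK_THRESHOLD = 80  # hypothetical cut-off chosen for this sketch


def route_lead(score):
    """Return the branch name for a lead score expected to be in 0-100."""
    # Booleans are ints in Python, so exclude them explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        return "manual_review"
    # This comparison is False for NaN as well as for out-of-range values,
    # so both fall through to the default branch instead of a dead end.
    if not (0 <= score <= 100):
        return "manual_review"
    if score >= FAST_TRACK_THRESHOLD:
        return "fast_track"
    return "nurture"
```

A test for this function would exercise both sides of the threshold plus every kind of unexpected input (missing values, strings, out-of-range numbers), so that each one lands on a named branch.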

Data transformations and Mapping

Automation frequently acts as the translation layer between tools speaking different data dialects. We inspect this fidelity closely. Upstream systems might unexpectedly alter a date format, or an incoming payload could contain null values and special characters. Your code must adapt to these irregularities without crashing the entire pipeline.

- Test mapping logic against known edge cases.

- Confirm data integrity remains intact across tool boundaries.

Resilience, Integration, and State

The "happy path" rarely causes insomnia. We focus instead on building resilience by simulating chaos. Force a server to return a 500 error or let a network request time out. You need to observe how the automation handles the rejection. Does the script back off with calculated delays, or does it aggressively hammer the endpoint until the third-party API blocks it? Throughput efficiency often degrades during these retry loops, so we monitor performance impact closely.

Security and consistency finish the list. Authentication flows require validation to ensure unauthorized users remain locked out. Finally, we examine state management through idempotency checks. If a process gets interrupted, restarting it should never result in duplicate actions.

- Verify backoff strategies prevent API rate-limiting.

- Confirm that re-running a specific transaction produces the same result without duplication.

- Stress-test authentication gates against invalid credentials.
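One way to picture the idempotency check is a step that remembers which requests it has already handled. The sketch below is an assumption-laden illustration: `ChargeStep`, its in-memory dictionary, and the `idempotency_key` parameter are hypothetical, and a real workflow would persist the keys in durable storage.

```python
class ChargeStep:
    """A workflow step that performs its side effect at most once per key."""

    def __init__(self):
        self.results_by_key = {}  # idempotency key -> stored result
        self.charges = []         # side effects that were actually performed

    def run(self, idempotency_key, amount):
        # A replay of the same key returns the stored result and does nothing else.
        if idempotency_key in self.results_by_key:
            return self.results_by_key[idempotency_key]
        self.charges.append(amount)
        result = {"status": "charged", "amount": amount}
        self.results_by_key[idempotency_key] = result
        return result


step = ChargeStep()
first = step.run("order-1001", 25)
replay = step.run("order-1001", 25)  # simulates a restart after an interruption
```

The test then asserts two things: the replay returns the same result, and the list of side effects contains exactly one entry.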

Validating Logic with Unit Tests

Strict isolation governs the quality of your verification process. It requires you to separate individual code blocks from their environment and test each one's functionality on its own. We ignore the database and internet connection initially to confirm the script works on its own terms.

Isolating individual steps and functions

We define success by how well a function performs in a vacuum. A script designed to calculate sales tax requires verification using fixed inputs to ensure the outputs match mathematical expectations. This methodology keeps execution speeds high. Failures here indicate an actual logic bug rather than an ephemeral glitch in the internet connection.

- Verify core mechanics without external interference.

- Prioritize speed to encourage frequent testing.

Mocking external dependencies

Mocking grants you control over the uncontrollable. We simulate the outside world by creating fake API responses. When the workflow attempts to fetch customer details, the test environment serves a pre-defined JSON object immediately. This technique prevents the test from failing due to unrelated third-party outages. You also gain the ability to reproduce rare scenarios easily.

- Inject fake responses to simulate 404 errors or timeouts.

- Decouple test success from third-party uptime.
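A minimal sketch of this mocking approach, using `unittest.mock` from the Python standard library. The `build_greeting` function and the `get_customer` client method are hypothetical names invented for the example.

```python
from unittest.mock import Mock


def build_greeting(client, customer_id):
    """Fetch a customer and build a greeting, degrading gracefully on timeout."""
    try:
        customer = client.get_customer(customer_id)
    except TimeoutError:
        return "Hello, valued customer"
    return f"Hello, {customer['name']}"


def test_uses_canned_response():
    client = Mock()
    # The fake API answers immediately with a pre-defined object.
    client.get_customer.return_value = {"id": 42, "name": "Ada"}
    assert build_greeting(client, 42) == "Hello, Ada"
    client.get_customer.assert_called_once_with(42)


def test_survives_timeout():
    client = Mock()
    # side_effect makes the fake raise, reproducing a rare failure on demand.
    client.get_customer.side_effect = TimeoutError("simulated timeout")
    assert build_greeting(client, 42) == "Hello, valued customer"
```

Because the client is injected as a parameter, neither test touches the network, and the timeout scenario is reproduced on every run rather than only when a third party happens to be slow.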

Testing data transformations

The parser must handle whatever we throw at it. We stress the system by intentionally injecting bizarre inputs into the data stream. Japanese characters or random emojis frequently break code that assumes plain ASCII text. Massive text strings get fed into the pipeline to uncover buffer issues or truncation errors.

- Input special characters to test encoding resilience.

- Ensure malformed data triggers appropriate error handling.
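As an illustration, the sketch below shows a small transformation step and the kind of hostile inputs worth feeding it. The function name and the 50-character limit are assumptions for the example, not requirements from any specific tool.

```python
import unicodedata

MAX_LEN = 50  # hypothetical field limit in the destination system


def clean_display_name(raw):
    """Normalize a display name before it crosses a tool boundary."""
    if not isinstance(raw, str):
        raise ValueError("display name must be a string")
    # NFC normalization makes visually identical strings compare equal.
    text = unicodedata.normalize("NFC", raw).strip()
    if not text:
        raise ValueError("display name is empty")
    # Slicing counts code points, not bytes, so multi-byte characters stay whole.
    return text[:MAX_LEN]
```

Useful test inputs include non-Latin scripts, emoji, whitespace-only strings, `None`, and a string several thousand characters long. Malformed values should raise a clear error rather than pass through silently.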

Validating business logic

Correctness remains the primary objective, and logic errors often hide in edge cases. We look specifically for "off-by-one" errors that sneak past casual glances during code review. Your automated checks must verify that business rules hold up when parameters hit their upper and lower limits.

Code coverage metrics

Intuition often fails to identify gaps, so we rely on data. Code coverage metrics highlight the logic parts that have not been exercised. We use these heatmaps to uncover dangerous assumptions lurking in the codebase. Identifying these blind spots ensures we haven't left critical paths unguarded.

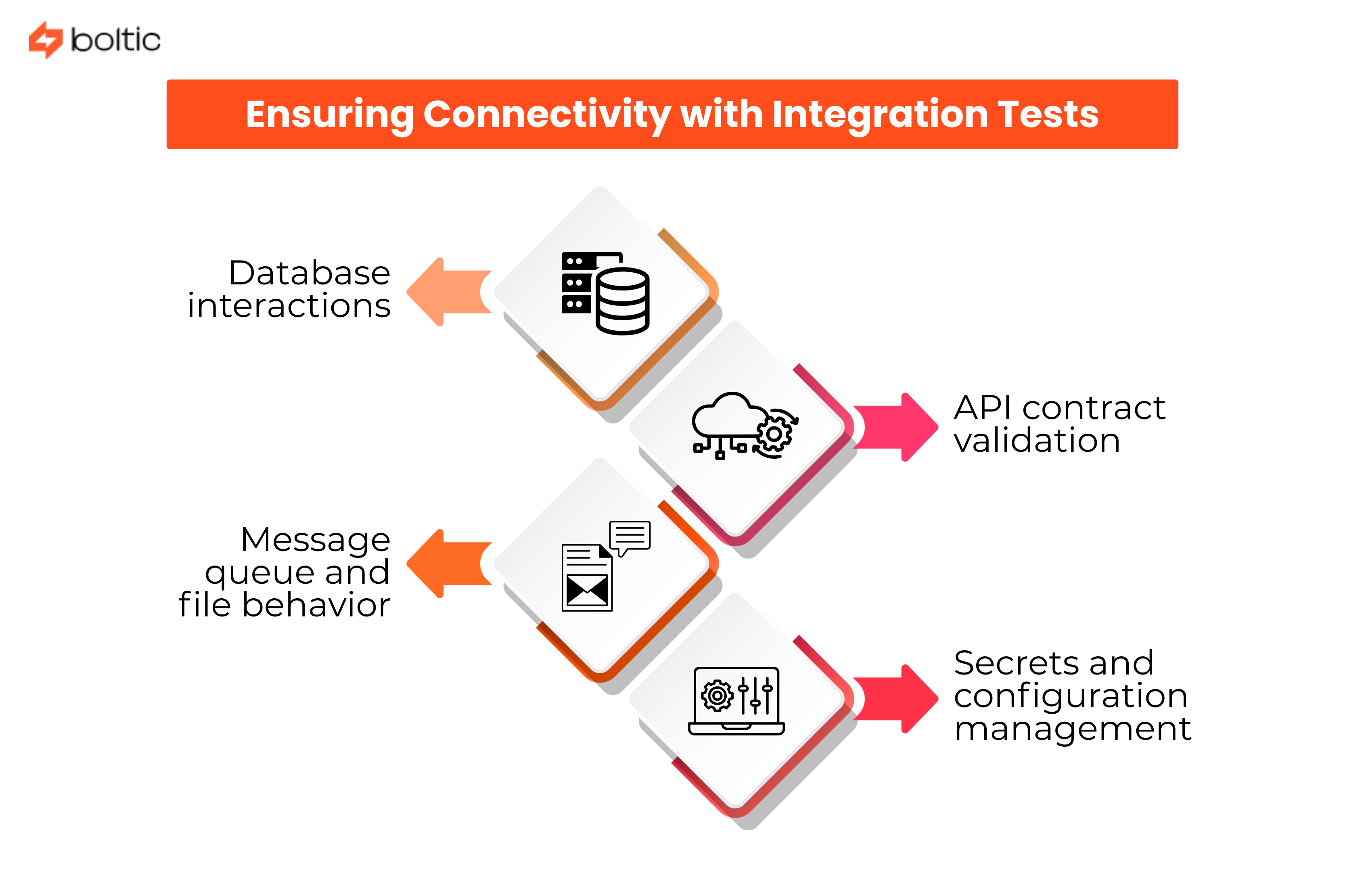

Ensuring Connectivity with Integration Tests

Logic frequently holds up in a vacuum only for the system to crumble at the seams. Integration testing validates the critical handshake between your code and the broader environment. A perfectly valid JSON object is useless if the receiver rejects it, so you must confirm that the interface actually functions as a bridge.

Database interactions

We cannot rely on mocks when testing data persistence rules. Real constraints bite hard. Spinning up temporary containerized databases allows us to simulate the environment accurately. This approach verifies that write operations respect specific column types and limits. You also need to confirm that transactions commit or roll back intentionally during failure states to preserve integrity.

- Verify write operations against actual schema constraints.

- Ensure transaction rollbacks function correctly during errors.
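The two checks above can be sketched against a real database engine. To keep the example self-contained it uses an in-memory SQLite database as a stand-in for the temporary containerized database described in the text; that substitution, the `orders` table, and its columns are all assumptions. Constraint behavior differs between engines, so a real suite should run against the same engine as production.

```python
import sqlite3


def make_db():
    """Create a throwaway database with real schema constraints."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders ("
        " id INTEGER PRIMARY KEY,"
        " email TEXT NOT NULL,"
        " quantity INTEGER NOT NULL CHECK (quantity > 0))"
    )
    return conn


def save_orders(conn, orders):
    """Insert a batch atomically: all rows are written or none are."""
    # "with conn" commits on success and rolls back if an exception escapes.
    with conn:
        conn.executemany(
            "INSERT INTO orders (email, quantity) VALUES (?, ?)", orders
        )


def count_orders(conn):
    return conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

A test would save a valid batch, then submit a batch whose second row violates the `CHECK` constraint, and assert both that `sqlite3.IntegrityError` is raised and that the row count is unchanged afterwards.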

API contract validation

Third-party platforms shift their ground constantly. Field definitions drift without notice. We treat API contract validation as an essential early warning system. You run these tests on a rigid schedule to catch changes before they corrupt your data. The goal involves ensuring the response body actually matches the structure your downstream tools require.

- Detect silent changes in third-party schemas.

- Validate that external APIs return expected data structures.
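A contract check can start very small. The sketch below compares a response body against the fields a downstream step depends on; the `EXPECTED_CONTACT` shape is a hypothetical example, and dedicated schema or contract tools offer far richer checks than this.

```python
# Hypothetical contract: the fields and types a downstream step relies on.
EXPECTED_CONTACT = {"id": int, "email": str, "created_at": str}


def contract_violations(payload, expected=EXPECTED_CONTACT):
    """Return a list of human-readable problems; an empty list means a match."""
    problems = []
    for field, expected_type in expected.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            problems.append(
                f"{field}: expected {expected_type.__name__}, got {actual}"
            )
    return problems
```

Run on a schedule against the real endpoint, a non-empty result is the early warning: the provider changed a type or dropped a field before your data was affected. Extra fields are deliberately ignored here, since additive changes rarely break consumers.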

Message queue and file behavior

Message queues and file triggers act as the invisible threads holding the process together. Verification is mandatory here. You must prove that placing a message on the queue actually wakes the intended consumer service. We also test that a file dropped into a storage bucket effectively initiates the correct processing flow. These background processes often fail silently without explicit checks.

- Confirm queue messages successfully trigger consumers.

- Test that storage events start the processing pipeline.

Secrets and configuration management

Security integration frequently gets overlooked until production deployment. The pipeline serves no purpose if it remains locked out. We check that the system retrieves keys from the vault and authenticates successfully. Expired credentials often masquerade as complex application errors, so validating the retrieval process saves significant debugging time.

- Check connectivity to secure credential vaults.

- Confirm that retrieved keys actually permit access.

Simulating Real World Scenarios via End-to-End Tests

Simulating a full production journey lets you see the architecture through the eyes of a user. We verify the system as a unified whole to ensure seamless operation, because every internal layer must communicate effectively with the others.

Simulating production scenarios

A web form submission often serves as the initial trigger for these deep dives. From that moment, you should watch the ripple effect through the entire technical stack.

- Tools that intercept emails provide the evidence needed to confirm the process completed every step accurately.

Proving system cohesion remains the primary goal here.

Test data management strategies

Safety is a priority when you handle sensitive information. We recommend creating synthetic records that reflect the scale of your actual user base. Privacy is maintained by using these artificial data sets.

Sandbox and test environment setup

A replica of the production stack allows for the safe creation and destruction of records. Alignment between these spaces requires constant vigilance from your team.

- Configuration drift remains a threat to test validity.

Success in the sandbox offers little assurance for the live environment if the configurations diverge. Parity between the two worlds must be preserved.

Handling asynchronous workflows

Because many modern workflows are asynchronous, the test runner cannot simply exit after firing the initial trigger. We suggest implementing polling mechanisms that check for completion signals. Listen for specific events to move the test forward.
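A polling helper along these lines is one way to keep the test runner alive until the workflow reports completion. The function name and parameters are assumptions; `sleep` and `clock` are injectable so the helper itself can be tested without real waiting.

```python
import time


def wait_for(check, timeout=30.0, interval=1.0,
             sleep=time.sleep, clock=time.monotonic):
    """Poll check() until it returns a truthy value or the timeout expires."""
    deadline = clock() + timeout
    while True:
        result = check()
        if result:
            return result
        if clock() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        sleep(interval)
```

In an end-to-end test, `check` would typically query a status endpoint or look for the expected record, for example `wait_for(lambda: find_order(order_id), timeout=60)`, where `find_order` is a hypothetical lookup in your own test code.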

Testing long-running processes

Tasks taking hours require tests that check status endpoints periodically. State must be maintained correctly across the entire timeline by the orchestration layer. You build resilience when you verify these extended sequences over time.

Multi-system orchestration validation

Final database states are queried to prove the logic held up. Accuracy across the board is the ultimate goal for any developer.

- Intercept outgoing messages for content verification.

- Inspect database records for integrity.

- Confirm status codes at every transition point.

Managing Modern API Dependencies

Modern automation depends entirely on robust interfaces. In an era with 74% of developers describing themselves as "API-first" and AI-driven traffic surging, verifying these points of contact becomes mandatory for any serious engineer. We focus on the health of these connections because failures in the live environment must be prevented at all costs. A comprehensive testing strategy ensures that every potential failure point is accounted for before code reaches production.

Contract testing with third-party APIs

Rigid agreements between workflows and the services they consume are established through contract testing. If a provider alters their schema in a way that violates the established contract, a failure should occur immediately. You gain the opportunity to adapt before production breaks when these boundaries are strictly defined.

- Contracts define the expected data structures.

- Automated failures provide early warnings for breaking changes.

Handling API versioning and changes

When an API offers multiple versions, you need to validate that your workflow's real-world behavior is compatible with the specific version and endpoints it calls. We frequently test the migration paths to ensure that transitions remain smooth across different releases. You must confirm that your automation points to the correct version at all times to maintain data integrity.

Rate limiting and throttling simulation

Throttling is simulated by forcing mock servers to return HTTP 429 errors. You are verifying that the workflow respects the retry headers. The system remains stable when execution pauses during a throttle event.

- Retry-After headers guide the timing of the next request.
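The throttling behavior can be sketched with a fake server that returns a scripted sequence of responses. Everything here is illustrative: `send` is a hypothetical callable returning `(status, headers, body)`, and the sketch only handles the seconds form of `Retry-After`, not the HTTP-date form the header also allows.

```python
import time


def call_with_retry(send, max_attempts=4, sleep=time.sleep):
    """Call send() until it stops returning 429 or attempts run out."""
    for attempt in range(1, max_attempts + 1):
        status, headers, body = send()
        if status != 429:
            return status, body
        if attempt < max_attempts:
            # Prefer the server's hint; fall back to exponential backoff.
            delay = float(headers.get("Retry-After", 2 ** attempt))
            sleep(delay)
    raise RuntimeError(f"still throttled after {max_attempts} attempts")


def make_fake_server(responses):
    """Return a send() stand-in that replays the given responses in order."""
    remaining = list(responses)

    def send():
        return remaining.pop(0)

    return send
```

Passing a recording function as `sleep` lets the test assert on the exact delays chosen without actually pausing, which is how you verify that the workflow honors the header instead of retrying immediately.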

Testing error responses and edge cases

Authentication flows require special attention when you encounter a 401 Unauthorized response. We recommend that your automation handles token refreshes automatically to maintain continuity. Resilience is built by anticipating these common failures.

OAuth and authentication flows

Security tokens must be managed with precision within your authentication logic. You verify the handshake process to ensure that access remains consistent even as credentials expire. Managing these identities correctly prevents downtime during critical automated tasks.

Webhook testing and validation

Mock payloads are fired at your endpoints to verify that signatures are validated correctly. You want the system to reject any requests that lack the proper digital proof.

- Signatures are checked for authenticity.

- Malicious requests are identified and blocked immediately.

- Systems respond only to verified sources.
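Many webhook providers sign the request body with a shared secret using HMAC. The sketch below assumes HMAC-SHA256 with a hex-encoded signature; the exact header name, encoding, and signed content vary by provider, so treat these details as assumptions to check against the provider's documentation.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """Compute the hex HMAC-SHA256 signature of a raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_valid_signature(secret: bytes, body: bytes, received: str) -> bool:
    """Check a received signature using a constant-time comparison."""
    if not isinstance(received, str):
        return False
    expected = sign(secret, body)
    return hmac.compare_digest(expected.encode(), received.encode())
```

A test fires three mock payloads at the handler: one correctly signed, one whose body was altered after signing, and one with no signature at all. Only the first should be accepted.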

Data Testing Strategies

Input validation serves as the primary gatekeeper for your data integrity. We recommend that workflows stop immediately when they encounter incorrect information. This prevents the introduction of errors deeper into your architecture. Ensuring that data stays clean from the start is a priority we share with high-performing teams.

Input validation and sanitization

Should a required field be missing or an email address appear malformed, the process halts. You want the system to reject these inputs immediately.

Schema validation

JSON structures are verified using dedicated validation libraries to ensure the incoming data is structured correctly. We check the structural integrity and the specific details of the payload.

- Business logic is confirmed by checking that delivery dates follow order dates.
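Structural checks and business rules can live in the same validation step. This sketch uses only the standard library; the field names and the deliberately crude email check are assumptions for illustration, and a dedicated validation library would normally handle the structural part.

```python
from datetime import date


def validate_order(order):
    """Return a list of validation errors; an empty list means the order is valid."""
    errors = []
    email = order.get("email")
    if (not isinstance(email, str) or email.count("@") != 1
            or email.startswith("@") or email.endswith("@")):
        errors.append("email is missing or malformed")

    dates = {}
    for field in ("order_date", "delivery_date"):
        try:
            dates[field] = date.fromisoformat(order[field])
        except (KeyError, TypeError, ValueError):
            errors.append(f"{field} is missing or not an ISO date")

    # Business rule: only meaningful once both dates parsed successfully.
    if len(dates) == 2 and dates["delivery_date"] < dates["order_date"]:
        errors.append("delivery_date precedes order_date")
    return errors
```

Returning every error at once, rather than stopping at the first, tends to make rejected records easier to diagnose. The workflow can still halt as soon as the list is non-empty.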

Data quality checks

We intentionally inject bad data to observe how the system responds. Future dates or negative prices are entered to test the robustness of your validation logic. Significant financial losses often stem from these overlooked quality issues.

Handling missing or malformed data

The system remains stable by identifying and rejecting invalid entries, so we emphasize strict checks. The price of neglecting data quality is steep: an estimated $12.9 million lost each year.

Testing data privacy and masking

Sensitive identifiers must be masked or encrypted during transmission. We utilize audit logs in the test environment to confirm that no private information is recorded.

- Passwords and personal details are never written to logs.

- Encryption protocols are verified during every transfer.
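One way to test the logging rule is to capture log output in memory and assert that a known sensitive value never appears in it. The sketch below masks email addresses with a `logging.Filter`; the regular expression is a simplified assumption and would need extending for other identifiers.

```python
import io
import logging
import re

# Simplified pattern for illustration; real address syntax is broader.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class MaskEmails(logging.Filter):
    """Replace anything that looks like an email address before it is written."""

    def filter(self, record):
        # Render the final message first so values passed as args are masked too.
        record.msg = EMAIL_RE.sub("[redacted-email]", record.getMessage())
        record.args = ()
        return True


def make_logger(stream):
    """Build an isolated logger that writes masked output to the given stream."""
    logger = logging.getLogger("workflow.masking_demo")
    logger.handlers.clear()
    logger.setLevel(logging.INFO)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.addFilter(MaskEmails())
    logger.addHandler(handler)
    return logger


stream = io.StringIO()
make_logger(stream).info("created user %s", "ada@example.com")
captured = stream.getvalue()
```

The assertion is then simply that the raw address is absent from `captured` and the placeholder is present.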

Volume and boundary testing

Large files and long strings are sent to discover the point of failure. You are looking for the moment when resources are exhausted. Identifying these limits helps you determine the actual capacity of your infrastructure. Testing these boundaries is essential for long-term scalability.

Error Handling and Resilience Testing

You build robustness by intentionally introducing chaos into your architecture. Taking specific services offline during execution reveals how your workflow reacts. We aim for a safe failure to prevent the system from entering a zombie state. Resource consumption should stay within expected limits even during high instability.

Network interruption scenarios

Severing connections during a live run exposes hidden vulnerabilities in your stack.

- Connections are dropped at random intervals.

- Traffic flow is monitored for unexpected spikes.

Timeout and retry logic validation

API calls that hang indefinitely can cripple your infrastructure if left unchecked. Establishing predefined limits to cut these connections automatically is mandatory. We find that a well-calibrated timeout prevents a single slow dependency from dragging down the entire system.

Dead letter queue testing

Safety nets are tested by forcing messages to fail repeatedly. You verify that these failed items are routed to a separate queue for manual inspection. This mechanism ensures that no data is lost because immediate processing was impossible.

- Repeated failures trigger the routing logic.

- The dead letter queue is inspected for the missing entries.
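The routing logic can be exercised without a real broker. This in-memory sketch is an assumption-heavy simplification: managed queues implement retry counts and dead-letter routing themselves, and `drain` here only mimics that behavior so the test can assert on it.

```python
from collections import deque


def drain(messages, handler, max_attempts=3):
    """Process messages, moving any that keep failing to a dead-letter list."""
    dead_letters = []
    pending = deque((message, 0) for message in messages)
    while pending:
        message, attempts = pending.popleft()
        try:
            handler(message)
        except Exception as exc:
            attempts += 1
            if attempts >= max_attempts:
                # Keep the payload and the reason so nothing is silently lost.
                dead_letters.append(
                    {"message": message, "attempts": attempts, "error": str(exc)}
                )
            else:
                pending.append((message, attempts))
    return dead_letters


processed = []


def handler(message):
    if message == "bad":
        raise ValueError("cannot parse bad")
    processed.append(message)


dead = drain(["ok-1", "bad", "ok-2"], handler)
```

The test checks both halves of the guarantee: healthy messages were processed, and the poisoned one ended up in the dead-letter list with its attempt count and error recorded.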

Compensating transaction verification

Rollback logic is essential for maintaining consistency across complex distributed transactions. We trigger failures at the final step to see if earlier entries are deleted or voided. You are looking for a perfectly reversed state. A failure late in the process initiates the compensation sequence immediately.
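A compensation sequence can be sketched as a list of paired actions, where each completed step registers its own undo. The step names below are hypothetical, and a production orchestrator would also need to handle compensations that fail, which this sketch does not.

```python
def run_saga(steps):
    """Run (action, compensate) pairs; on failure, undo completed steps in reverse."""
    completed = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(completed):
                undo()
            return False
        completed.append(compensate)
    return True


log = []


def fail_shipping():
    raise RuntimeError("shipping API unavailable")


steps = [
    (lambda: log.append("reserve stock"), lambda: log.append("release stock")),
    (lambda: log.append("charge card"), lambda: log.append("refund card")),
    (fail_shipping, lambda: log.append("cancel shipment")),
]
succeeded = run_saga(steps)
```

Forcing the final step to fail is the interesting case. The log should show the two earlier steps undone in reverse order, and no compensation for the step that never completed.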

Circuit breaker behavior

Dependencies that stay down should not be hammered with endless requests. You implement circuit breakers to allow both sides sufficient time to recover. The test confirms that the breaker opens and the system stops trying to connect to a failing service, which is what prevents a cascading failure across your entire network.
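For illustration, here is a deliberately small circuit breaker with an injectable clock so that tests can skip the cool-down period. The class, its thresholds, and its single-trial recovery behavior are assumptions for this sketch; resilience libraries offer more complete implementations.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call skipped")
            # Cool-down elapsed: let one trial call through.
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

The key assertion in a test is the call count on the dependency: once the threshold is reached, further calls must raise `CircuitOpenError` without the failing service being contacted at all.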

Performance and Load Testing

Throughput benchmarking defines what normal operation looks like for your architecture. You establish a baseline to understand exactly how many executions the system sustains per minute. We see these metrics as the foundation for all future growth. Identifying the limits of your current setup is the first step toward building a resilient application.

Throughput benchmarking

Establishing a baseline allows us to measure your standard capacity. You define the specific volume of requests the system handles under typical conditions before moving to more aggressive checks.

Concurrent execution testing

Race conditions often hide within the quiet corners of your code until multiple processes fight for the same resources. We recommend flooding the system with simultaneous requests to force lock contention and other synchronization problems into the open.

- Simultaneous requests reveal synchronization errors.

- Resource contention is measured under extreme pressure.
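A concurrency test usually asserts an invariant that must hold no matter how requests interleave. In this sketch, many simultaneous requests compete for a limited stock, and the invariant is that reservations never exceed it. The `Inventory` class and the thread-based load are assumptions; a real test would send concurrent requests to the deployed workflow.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class Inventory:
    def __init__(self, stock):
        self.stock = stock
        self._lock = threading.Lock()

    def reserve(self):
        # The lock makes the check-then-decrement sequence atomic.
        with self._lock:
            if self.stock > 0:
                self.stock -= 1
                return True
            return False


def hammer(inventory, requests=50, workers=10):
    """Fire many reservations concurrently and count how many succeeded."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lambda _: inventory.reserve(), range(requests)))
    return results.count(True)
```

Without the lock, two threads can both pass the `stock > 0` check before either decrements, which is the kind of synchronization error this style of test is meant to reveal. Such failures are timing-dependent, so a passing run is evidence rather than proof.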

Resource consumption monitoring

Memory leaks can crash worker nodes if a process fails to release RAM correctly. You should watch these resource levels during high-load tests to ensure long-term stability.

Bottleneck identification

Efficient optimization becomes possible once you locate the primary choke point. We often find that a slow external API or a struggling database limits the entire workflow. Identifying these hurdles allows for targeted improvements.

- Knowing where the system slows down is half the battle in performance tuning.

Scalability validation

Adding more hardware should ideally yield linear performance gains for your application. We test whether extra worker nodes actually increase output or if the bottleneck simply shifts to a different component. Extra resources must translate into real-world speed.

Cost projection under load

Financial surprises are avoided when you project cloud expenses at a high volume. A million executions might turn a minor inefficiency into a massive bill. We suggest calculating these costs early to prevent budget overruns. Your bottom line depends on how well you understand the relationship between code efficiency and infrastructure spend. Consistency between hardware performance and financial investment is essential for sustainable growth.

Testing Event-Driven Workflows

Event-driven architectures offer your integrations a level of agility that traditional models struggle to match. At Boltic, we see the power of these reactive flows every day in the way complex data movements happen in real time. Use dedicated test subscribers to listen for events across the infrastructure, and verify that the publisher delivers the correct payload to the intended topic.

Event publishing and consumption

Capturing events as they traverse the broker confirms that your message flow remains healthy.

- Subscribers capture payloads for immediate verification.

- Topics receive the intended data without loss.

Message ordering and deduplication

Systems occasionally receive messages out of sequence in distributed environments. You can design scenarios where a shipping alert precedes an order to verify the logic remains sound. We test deduplication by sending identical event IDs twice. This ensures your financial records stay accurate by preventing double counting.
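Both scenarios can be sketched with a small consumer that tracks seen event IDs and parks events that arrive too early. The event shapes and the `OrderProjection` class are hypothetical, and the in-memory set stands in for whatever durable store a real consumer would use.

```python
class OrderProjection:
    """Consumer that tolerates duplicate and out-of-order events."""

    def __init__(self):
        self.seen_event_ids = set()
        self.orders = {}
        self.parked = []  # shipping events that arrived before their order

    def handle(self, event):
        if event["id"] in self.seen_event_ids:
            return "duplicate"
        self.seen_event_ids.add(event["id"])

        order_id = event["order_id"]
        if event["type"] == "order_created":
            self.orders[order_id] = {"total": event["total"], "shipped": False}
            # Apply any shipping events that were waiting for this order.
            for parked in [e for e in self.parked if e["order_id"] == order_id]:
                self.orders[order_id]["shipped"] = True
                self.parked.remove(parked)
            return "applied"
        if event["type"] == "order_shipped":
            if order_id not in self.orders:
                self.parked.append(event)
                return "parked"
            self.orders[order_id]["shipped"] = True
            return "applied"
        return "ignored"

    def revenue(self):
        return sum(order["total"] for order in self.orders.values())
```

The test sends the shipping alert first, then the order, then the same order event a second time, and asserts that the order ends up shipped and that revenue is counted once.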

Event schema validation

Stability for downstream services is guaranteed through strict schema validation. Catching payload changes early prevents the kind of crashes that disrupt the entire ecosystem.

Consumer group behavior

You also want to observe how consumer groups distribute the load. We check that fan-out mechanisms reach every listener as planned.

- Load balancing across groups is monitored for efficiency.

Replay and backfill scenarios

Replaying past events allows you to restore the state perfectly after any technical pause. This backfill process ensures that your system remains current without creating duplicates. We find that the ability to relive historical data is one of the greatest strengths of this architecture. You gain confidence by watching your system reconstruct its timeline accurately. This process allows your data to catch up safely while maintaining perfect integrity across the board.

Testing AI/ML Workflows

Intelligent workflows demand a departure from rigid assertions. You will find that LLMs introduce a natural variability which requires a more nuanced approach. With 67% of respondents now integrating AI into their dev cycles, we need ways to deal with that variability. We embrace this behavior, along with its challenges, at Boltic because it allows for truly sophisticated automation workflows. Semantic assertions help you verify that the output conveys the right meaning, for example by checking for expected keywords, rather than matching an exact string.

Prompt and response testing

Establishing a golden set of inputs provides the foundation for your testing cycle. You determine the range of acceptable responses to ensure the intent is captured. We observe that sentiment analysis scores often fluctuate without indicating a system failure.

- Monitoring sentiment scores for broad trends provides a clearer picture of model health.

Handling non-deterministic responses

Through these intelligent bounds, consistency is maintained. Ambiguity is a characteristic of generative intelligence that you must manage. If a response suddenly shifts from positive to negative, the test flags a failure.
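A bounded assertion of this kind might look like the sketch below. It assumes sentiment scores in the range -1 to 1 and a tolerance of 0.2 around a baseline recorded from the golden set; both numbers are illustrative choices, not recommendations.

```python
def check_sentiment(score, baseline, tolerance=0.2):
    """Classify a sentiment score against the baseline from a golden set."""
    # A change of sign means the response flipped polarity: always a failure.
    if (score >= 0) != (baseline >= 0):
        return "fail: polarity flipped"
    # Same polarity but far from the baseline: worth a look, not a hard failure.
    if abs(score - baseline) > tolerance:
        return "warn: drift outside tolerance"
    return "pass"
```

Ordinary run-to-run fluctuation stays within the tolerance and passes, a larger shift produces a warning for trend monitoring, and only a positive-to-negative flip fails the test outright.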

Quality metrics (accuracy, relevance)

To ensure relevance, we use a more advanced model to grade your production model. This judge provides an automated layer of oversight for your tasks. We look for accuracy in every response generated by the system to maintain high quality.

Bias and fairness testing

Explicit tests for bias are necessary to verify that your guardrails remain intact. You should challenge the model with tricky inputs to see how it handles difficult topics. Safety is a critical component of any intelligent workflow.

Regression detection for model changes

Swapping models involves a deep dive into your historical benchmarks. You need to ensure the new model maintains the quality of the previous one.

- New versions are compared directly against the golden set.

- Benchmarks provide evidence for a safe migration.

- Before a full release, performance gains must be documented.

Test Data Management

Good testing requires realistic data. If we use perfectly sanitized data to test a system that will process messy real-world inputs, we create false confidence.

Creating realistic test datasets

Messy inputs are embraced to mirror the complexity of your live environment. You build genuine confidence by including flaws and irregularities within your test data.

- Libraries generate thousands of fake identities that exercise your logic.
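The sketch below generates synthetic contacts with the standard library only, as a stand-in for the dedicated fake-data libraries mentioned above. The name lists, the 10% share of messy records, and the `example.test` domain are assumptions. A fixed seed makes the dataset reproducible across runs.

```python
import random

FIRST_NAMES = ["Ada", "Grace", "Linus", "Zoë", "太郎"]
LAST_NAMES = ["Lovelace", "Hopper", "Torvalds", "O'Neil", "山田"]


def synthetic_contacts(count, seed=1234, messy_ratio=0.1):
    """Generate fake contacts, deliberately including some flawed records."""
    rng = random.Random(seed)  # private generator: same seed, same dataset
    contacts = []
    for i in range(1, count + 1):
        name = f"{rng.choice(FIRST_NAMES)} {rng.choice(LAST_NAMES)}"
        contact = {"id": i, "name": name, "email": f"user{i}@example.test"}
        if rng.random() < messy_ratio:
            # Real-world data is not clean, so the test data should not be either.
            contact["email"] = rng.choice([None, "", "  NOT-AN-EMAIL  "])
        contacts.append(contact)
    return contacts
```

Because no record is derived from a real person, the dataset can be shared freely, and recording the seed alongside a failed run lets you regenerate exactly the data that triggered it.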

Data privacy and synthetic data generation

Safety is a non-negotiable requirement. Synthetic data that statistically resembles production records is created to protect actual users. This is a best practice we follow at Boltic, where these intelligent workflows integrate seamlessly while keeping private information secure.

Test data versioning

Inconsistent variables make debugging nearly impossible during a failed run. Reproducibility is ensured when you version your datasets to confirm the data remained unchanged.

Production-like test environments

Infrastructure as code allows us to spin up disposable setups. These environments mirror your production stack to provide accurate results.

- Fresh databases are built for every execution.

Data cleanup and isolation

Leftover records from previous sessions can corrupt your current results. Environments are binned immediately after a suite concludes to ensure you start every journey with a clean slate. Reliable outcomes are maintained through this strict isolation.

Continuous Integration for Workflows

Automated testing belongs inside the CI/CD pipeline, where every modification gets instant feedback. Boltic supports this lifecycle by managing the orchestration of complex tasks, automation lifecycle management, and various external system integrations. We set these tests up as blocking gates: if the regression suite fails, no code can be merged into the main branch.

Automated testing in CI/CD pipelines

Tests should kick off the moment a change is made. Teams that hit their stride with these automated triggers tend to see a 50% jump in software delivery performance; the test suite becomes a tool for staying ahead.

Pre-deployment validation gates

Regression suites serve as mandatory gates for your code. We believe that nothing should be merged into the main branch until every test passes.

Regression testing automation

Scheduled runs are necessary to catch issues from external library updates or API shifts. Boltic users find that intelligent automation requires this constant vigilance to remain stable.

Deployment smoke tests

Healthy connections are verified immediately after your code goes live:

- Application health is confirmed in the production environment.

- Connectivity is checked as a final safety measure.

Rollback validation

Reverting changes is practiced in staging long before a real emergency arises. You should treat the rollback mechanism as a vital feature that requires rigorous verification. A safe state must be restorable without data loss.

Monitoring and Observability in Testing

A failed test is pretty useless if we don't have a clear idea why it failed. When a test breaks, we want to be able to see what input variables were used. That turns a 'fail' signal into a useful debugging report.

Instrumentation for test visibility

You need clear visibility when a workflow fails. We recommend instrumenting every step to produce structured logs that provide deep context.

Log aggregation and analysis

Input variables and API response codes offer the necessary insights for your team. Detailed analysis turns a failure signal into a roadmap for a solution.

Metrics collection during tests

Long-term trends are monitored during execution to identify performance shifts. You should catch degradation before it hits a timeout threshold.

Distributed tracing in test environments

Visualizing request paths through multiple services helps you pinpoint exactly where latency occurs. Boltic offers the intelligent oversight needed to handle these complex observations within your automated pipelines.

- Latency is identified across the entire stack.

Identifying flaky tests

Intermittent failures erode the confidence of your developers. We suggest isolating unstable tests immediately to ensure that every error signal is respected.

- Flaky signals damage team morale.

- Consistent results preserve developer trust.

- Inconsistent tests are repaired before returning to the suite.

Production Testing Strategies

Canary deployments and gradual rollouts

Deployment represents the beginning of a new validation phase. You should use canary rollouts to expose a fresh version to a small fraction of traffic. Errors trigger an automatic revert to limit the impact on your user base.

A/B testing workflow variations

Parallel versions help us optimize your business logic. You gain insights by comparing how different configurations perform under identical conditions. These trials provide data to support your long term strategy.

Shadow testing with production traffic

Intelligent platforms like Boltic allow you to run new logic alongside live data without influencing user outcomes. We compare these results to ensure accuracy before full deployment.

- Outputs are suppressed during the shadow run.

- Accuracy is verified against live production results.

Synthetic monitoring

Continuous health checks simulate user journeys to maintain a constant success signal. These automated probes find issues before your customers do. You ensure that primary paths remain operational 24/7.

Chaos engineering principles

Resilience is verified when we intentionally introduce latency or remove a pod. You gain the ultimate proof of stability through these controlled stresses. Observing the recovery process confirms that your architecture is truly robust. Mature observability turns these experiments into actionable improvements for your automated workflows.

Common Testing Challenges

Dealing with non-deterministic behavior

Fuzzy matching helps us handle third-party services that return varied results. You build flexibility into your retry logic to accommodate these unpredictable patterns.

Testing scheduled and time-triggered workflows

Waiting an entire month to verify a report trigger is impractical. Boltic empowers you with time travel mechanisms to force-execute these events during testing.

- Scheduled triggers are fired on demand.
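If your platform does not offer such a mechanism, a common generic pattern is to pass the current time into the trigger instead of reading the system clock. The sketch below illustrates that pattern only; `MonthlyReportTrigger` is a hypothetical class, not a Boltic API.

```python
from datetime import datetime


class MonthlyReportTrigger:
    """Fires an action once per month on a given day of the month."""

    def __init__(self, day_of_month, action):
        self.day_of_month = day_of_month
        self.action = action
        self.last_run = None  # (year, month) of the most recent run

    def tick(self, now: datetime) -> bool:
        """Evaluate the schedule for an injected 'now'; return True if fired."""
        this_month = (now.year, now.month)
        due = now.day == self.day_of_month and self.last_run != this_month
        if due:
            self.action(now)
            self.last_run = this_month
        return due
```

Because `now` is an argument, a test can step through several months in milliseconds by calling `tick` with whatever dates it needs.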

Managing test environment costs

Cloud resources are often billed by the second, so efficiency matters. We recommend scheduling downtime for idle environments and using mocks to reduce the financial footprint. These infrastructure choices keep budget burn in check.

Third-party service testing limitations

Sandbox APIs often have low rate limits that hinder deep testing. Use caching and mock services to avoid hitting these caps while still exercising your logic.
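A small sketch of both ideas together, using the standard library's `unittest.mock`. The `CachingClient` class and the shape of the fake response are assumptions made for illustration.

```python
from unittest.mock import Mock


class CachingClient:
    """Wraps a fetch function so repeated lookups reuse the first response."""

    def __init__(self, fetch):
        self._fetch = fetch
        self._cache = {}

    def get(self, key):
        if key not in self._cache:
            self._cache[key] = self._fetch(key)
        return self._cache[key]


# A mock stands in for the rate-limited sandbox API during tests.
sandbox_api = Mock(side_effect=lambda customer_id: {"id": customer_id, "status": "active"})
client = CachingClient(sandbox_api)

first = client.get("c-1")
second = client.get("c-1")  # served from the cache, no second API call
```

After both lookups, `sandbox_api.call_count` is 1, which is the property that keeps a large suite under a sandbox rate limit.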

Maintaining the test suite over time

Modular and readable code ensures your suite remains a tool rather than a burden. You should treat test code with the same respect as your production application.

- Refactoring is simplified by modular design.

- Feature development speed is preserved.

Testing Tools and Frameworks

The ecosystem of testing tools shapes what is possible. Platform-native tools in systems like Logic Apps or n8n provide basic coverage. For more intelligent, AI-powered validation of your complex automated flows, we recommend Boltic. These tools serve as your first line of defense during the development cycle.

API testing platforms (Postman, Insomnia)

API platforms like Postman and Insomnia let you script complex request chains. We use them to simulate full user sessions across your connected services. Your integrations stay robust when every endpoint is verified through these scripts.

Load testing tools (k6, JMeter)

Tools like k6, whose test scenarios are written in JavaScript, make it easier for developers to own performance testing code. JMeter lets us flood your architecture with traffic to find the breaking point.

Contract testing (Pact)

Agreements between your microservices are managed by specialized frameworks like Pact. You prevent breaking changes by ensuring that providers and consumers always speak the same language.
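To illustrate the idea behind contract testing without relying on Pact's own API, here is a plain-Python sketch. It assumes a contract is just a mapping of required field names to expected types; `contract_violations` and the field names are hypothetical.

```python
def contract_violations(contract: dict, response: dict) -> list:
    """Return a list of human-readable problems; an empty list means compliant."""
    problems = []
    for field_name, expected_type in contract.items():
        if field_name not in response:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(response[field_name], expected_type):
            actual = type(response[field_name]).__name__
            problems.append(
                f"wrong type for {field_name}: "
                f"expected {expected_type.__name__}, got {actual}"
            )
    return problems


# The consumer declares what it relies on; the provider's response is checked against it.
ORDER_CONTRACT = {"order_id": str, "total_cents": int}
```

A framework like Pact adds what this sketch lacks: contracts generated from consumer tests, shared between teams, and verified against the real provider in CI.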

Orchestration testing frameworks

For code-based orchestration in Python, we use widely adopted frameworks like pytest. These frameworks offer the flexibility needed for custom logic.
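A minimal sketch of what such tests might look like. pytest collects any function whose name starts with `test_` and reports failed `assert` statements, so no special imports are needed here. The pipeline and step functions are hypothetical examples, not part of any orchestration library.

```python
def run_pipeline(steps, payload):
    """Pass the payload through each step in order and return the final result."""
    for step in steps:
        payload = step(payload)
    return payload


def normalize_email(record):
    """Trim whitespace and lowercase the email address."""
    return {**record, "email": record["email"].strip().lower()}


def tag_domain(record):
    """Add the domain part of the email as a separate field."""
    return {**record, "domain": record["email"].split("@")[1]}


def test_steps_run_in_order():
    result = run_pipeline([normalize_email, tag_domain], {"email": "  Ada@Example.COM "})
    assert result == {"email": "ada@example.com", "domain": "example.com"}


def test_empty_pipeline_returns_payload_unchanged():
    assert run_pipeline([], {"email": "a@b.c"}) == {"email": "a@b.c"}
```

Saved as a file such as `test_pipeline.py`, these tests run with the `pytest` command.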

Cloud-native testing services

Global traffic patterns are simulated via cloud-native testing services. You ensure that a user in Tokyo experiences the same performance as one in London. Parity across all regions is a core goal for your global infrastructure.

Quality Metrics and KPIs

Test coverage percentage

Coverage data reveals which steps and branches of an automated workflow your tests never execute. Its primary role is to confirm execution; it does not prove that the executed logic is correct.

Defect escape rate

The escape rate quantifies the share of bugs that reach production. A rising rate demands an immediate audit of your test scenarios, because those defects bypassed your guardrails.
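One common way to compute this metric, assuming you track where each defect was found, is production defects divided by all defects in the same period. The function name is illustrative.

```python
def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that were first discovered in production."""
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0  # no defects recorded, so nothing escaped
    return found_in_production / total
```

For example, 5 production defects against 45 caught before release gives an escape rate of 0.1, or 10%.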

Mean time to detect (MTTD)

Awareness of failures should arrive within minutes. We prioritize low detection times to protect the user experience.
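MTTD is the average gap between when a failure started and when it was detected. A small sketch, assuming each incident is recorded as a `(started, detected)` pair of datetimes:

```python
from datetime import datetime, timedelta


def mean_time_to_detect(incidents) -> timedelta:
    """Average of (detected - started) across all incidents."""
    gaps = [detected - started for started, detected in incidents]
    if not gaps:
        raise ValueError("at least one incident is required")
    return sum(gaps, timedelta()) / len(gaps)
```

Tracking this value per workflow, rather than as one global number, makes it easier to see which alerts are too slow.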

Test execution time

Parallelize your suite to keep feedback cycles rapid. Speed preserves developer momentum during the automation process.

Flaky test ratio

Isolate intermittent failures to maintain suite health. Trust in your automation signals depends on a low flaky ratio.
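A sketch of how the ratio could be computed from CI history, under one assumed definition: a test is flaky if it both passed and failed on the same code revision. The record format `(test_name, revision, passed)` is hypothetical.

```python
from collections import defaultdict


def flaky_ratio(runs) -> float:
    """Fraction of tests that both passed and failed on the same revision."""
    outcomes = defaultdict(set)
    tests = set()
    for name, revision, passed in runs:
        tests.add(name)
        outcomes[(name, revision)].add(passed)
    # Two distinct outcomes on one revision means the code did not change
    # but the result did.
    flaky = {name for (name, _), seen in outcomes.items() if len(seen) == 2}
    return len(flaky) / len(tests) if tests else 0.0
```

A test that passes on one revision and fails on the next is not counted, since that may be a real regression rather than flakiness.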

Production incident correlation

Correlating incidents with specific processes reveals high-risk zones. Direct your investment toward the workflows causing the most outages so that quality improves where it matters most.
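A simple starting point for this correlation is counting incidents per workflow. The sketch assumes each incident record is a dict with a `workflow` key; the workflow names in the example are made up.

```python
from collections import Counter


def riskiest_workflows(incidents, top_n=3):
    """Return the top_n (workflow, incident_count) pairs, most incidents first."""
    counts = Counter(incident["workflow"] for incident in incidents)
    return counts.most_common(top_n)


incident_log = [
    {"workflow": "invoice-sync"},
    {"workflow": "lead-routing"},
    {"workflow": "invoice-sync"},
    {"workflow": "invoice-sync"},
]
ranking = riskiest_workflows(incident_log, top_n=2)
```

Here `ranking` puts `invoice-sync` first with three incidents, which would make it the first candidate for additional test investment.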

Fostering a Quality-First Culture

Test-driven development for workflows

Writing a test before you build forces you to define goals clearly. We find this lean approach keeps development focused, because the requirements are automated first.

Shift-left testing approach

Testability is discussed during the design phase. We involve quality experts early to find gaps. Boltic helps you design intelligent workflows with validation in mind from the start.

Developer responsibility and ownership

The person building the feature owns the quality. You remain responsible for the health of your code.

QA and automation engineering collaboration

Engineers and QA professionals team up to cover every scenario. Happy paths and failure modes are explored together for robustness.

Continuous improvement practices

Your test suite is a living organism. Constant trimming ensures it supports the team. We refine these processes daily to maintain high standards. Performance is optimized through this regular maintenance of your automated assets.

Conclusion

Building a solid strategy for testing workflows is an ongoing practice, not a one-time task. Build resilience by focusing on your most critical data paths first.

Creating a comprehensive test strategy

We start with core logic that drives revenue. Boltic enables you to expand these intelligent guardrails as your automation matures.

Balancing thoroughness and velocity

Prioritize what truly matters because testing every scenario is impossible. Maintaining this balance ensures development remains rapid. Stability is preserved through targeted checks.

Evolving tests with workflow complexity

Simple checks grow into complex simulations over time. Sophistication within your AI-powered workflows demands evolving strategies. We treat automation as a robust engine for growth.

Resources and community best practices

Shared knowledge strengthens your architecture. We design for inevitable failure to ensure fast recovery, so users remain unaware of disruptions. Continuous checking makes your automation a reliable driver for business progress, and a persistent focus on operational health turns potential vulnerabilities into strengths.
