Conversational AI did not start with large language models. It started with scripted bots. Early systems followed strict rules. If a user said X, the bot responded with Y. That was enough for simple workflows like checking order status or answering basic policy questions.

Then, machine learning improved intent detection. Bots became better at classifying queries and extracting entities such as dates or ticket numbers. This added flexibility. Still, these systems remained reactive. They waited for inputs and followed predefined logic.

The arrival of large language models changed the tone of interactions. Systems could now understand nuance. They could generate natural responses. Conversations felt smoother and less mechanical.

Now we are entering another stage. AI is no longer just responding. It is acting. That is where agentic AI comes in. To understand this shift clearly, we need to explore what makes an AI agent fundamentally different from what came before.

Why “agent” represents a fundamental shift

The word “assistant” suggests support. The word “agent” suggests autonomy.

An AI agent does not only answer questions. It works toward a defined goal. It decides what steps to take. It selects tools. It checks the results. It corrects itself when something fails.

This is a shift from conversation to execution. Instead of a simple exchange where a user asks and AI responds, the process becomes goal-driven:

- The user defines a goal

- The AI plans the steps

- The AI performs actions

- The AI checks the results

- The AI adapts if needed

That loop is what makes the difference. It introduces continuity and intention into the system.
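The loop above can be sketched in a few lines of Python. This is a minimal illustration, not a production design. The names `run_agent`, `toy_plan`, and `toy_act` are hypothetical, and the planner and executor are plain functions standing in for a model and real tools.

```python
from typing import Callable

def run_agent(goal: str,
              plan: Callable[[str], list[str]],
              act: Callable[[str], bool],
              max_attempts: int = 3) -> dict[str, str]:
    """Plan steps for a goal, perform each one, check the result, retry on failure."""
    outcomes: dict[str, str] = {}
    for step in plan(goal):                           # the AI plans the steps
        for attempt in range(1, max_attempts + 1):
            succeeded = act(step)                     # the AI performs an action
            if succeeded:                             # the AI checks the result
                outcomes[step] = f"done (attempt {attempt})"
                break
            # otherwise the AI adapts; in this sketch it simply tries again
        else:
            outcomes[step] = "failed, needs human review"
    return outcomes

# Hypothetical stand-ins for a planner and an action executor.
def toy_plan(goal: str) -> list[str]:
    return ["look up order", "update record", "notify customer"]

_calls = {"update record": 0}

def toy_act(step: str) -> bool:
    # Simulate one transient failure on "update record".
    if step == "update record":
        _calls[step] += 1
        return _calls[step] > 1
    return True

result = run_agent("fix the shipping address on an order", toy_plan, toy_act)
print(result)
```

The point of the sketch is the shape of the control flow: the user supplies only the goal, and the retry happens without a new prompt.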

This naturally raises a practical question. What does this shift mean for businesses and developers?

For businesses, automation can move beyond surface-level chat. Instead of answering questions, systems can complete tasks. That includes updating records, processing workflows, and coordinating across tools.

For developers, this means building systems that:

- Integrate with APIs

- Maintain short-term and long-term memory

- Execute multi-step workflows

- Handle uncertainty and edge cases

At Boltic, we are exploring how AI agents can fit into modern business processes without turning systems into black boxes. The focus stays on clarity, observability, and measurable outcomes rather than hype.

Chatbots: The first generation

Before we understand agents fully, it helps to look back at how chatbots evolved.

Rule-based and decision tree approaches

Early chatbots worked like flowcharts. They relied on predefined rules and structured decision trees. Each path was mapped in advance.

If a user selected option 1, they were taken to branch A. If they typed “refund,” they were routed to a refund flow. The logic was predictable and easy to audit.

This worked well for:

- FAQs

- Simple routing

- Basic customer support

- Appointment confirmations

When the workflow was stable and repetitive, chatbots performed reliably.
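A rule-based router of this kind can be sketched as a keyword table. The flow names and keywords below are made up for illustration.

```python
# Hypothetical keyword-to-flow table; every path is mapped in advance.
RULES = {
    "refund": "refund_flow",
    "order status": "order_status_flow",
    "appointment": "appointment_flow",
}

def route(message: str) -> str:
    """Return the first flow whose keyword appears in the message."""
    text = message.lower()
    for keyword, flow in RULES.items():
        if keyword in text:
            return flow
    return "fallback_menu"   # nothing matched, so show the default menu

print(route("I want a REFUND please"))    # matches the "refund" keyword
print(route("Can I get my money back?"))  # same need, but no keyword matches
```

The second call shows the trade-off. The logic is easy to audit, but a user who phrases the request differently falls through to the fallback.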

Intent classification and entity extraction

Later systems added machine learning. Instead of strict keyword matching, they could detect user intent. They could extract useful entities such as order IDs, dates, or account numbers.

This improved flexibility. Users no longer had to match exact phrases. But the architecture was still rigid underneath. Once the intent was identified, the bot followed a predefined path.
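Here is a small sketch of that pipeline. It is an assumption-heavy stand-in: keyword overlap replaces a trained intent classifier, and the order ID format (`AB-12345`) and ISO dates are invented for the example.

```python
import re

# Hypothetical intents, each with words that hint at it.
INTENT_KEYWORDS = {
    "refund_request": {"refund", "money", "back", "return"},
    "order_status": {"where", "status", "track", "arrive"},
}

ORDER_ID = re.compile(r"\b[A-Z]{2}-\d{4,}\b")      # assumed format, e.g. AB-12345
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")    # e.g. 2024-05-01

def classify_intent(message: str) -> str:
    """Score each intent by keyword overlap; a real system would use a trained model."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    scores = {intent: len(words & kws) for intent, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

def extract_entities(message: str) -> dict[str, list[str]]:
    """Pull structured values out of free text."""
    return {
        "order_ids": ORDER_ID.findall(message),
        "dates": ISO_DATE.findall(message),
    }

message = "Can I get my money back for AB-12345?"
intent = classify_intent(message)
entities = extract_entities(message)
print(intent, entities)
```

Once `intent` is known, the bot still hands off to a fixed flow, which is the rigidity described above.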

Limitations of Traditional Chatbots

Even though traditional chatbots played a major role in early automation, they come with structural constraints that are hard to overcome.

They are reactive by design. They respond, but they do not plan.

Use Cases Where Chatbots Still Make Sense

Despite these limitations, chatbots remain valuable in many environments. When workflows are predictable and structured, they are efficient and cost-effective.

The key is alignment. If the workflow is stable and rule-driven, chatbots remain a practical solution.

LLM-powered assistants: Generation two

The second generation of conversational AI emerged with large language models.

The GPT revolution in conversational AI

Large language models changed expectations. Systems powered by models like GPT can understand natural phrasing, generate human-like responses, and maintain conversational flow.

Users no longer need to follow a script. They can speak naturally. The assistant adapts.

Capabilities of LLM-powered assistants

These assistants can summarize long documents, draft emails, answer complex knowledge questions, and maintain short-term conversational context. They are flexible and expressive. This improved user experience significantly.

Remaining limitations

Even with strong language skills, these assistants have limits:

- They do not take actions by default.

- They lack persistent memory across sessions by default.

- They may hallucinate information.

- They still operate in a prompt-response loop.

The prompt-response paradigm

The interaction pattern remains simple:

- The user sends a prompt.

- The model generates a response.

- The interaction ends or continues.

There is no built-in planning engine. No independent goal pursuit. No persistent operational state.

This is where AI agents go further. Now let us look at what makes these agents different!

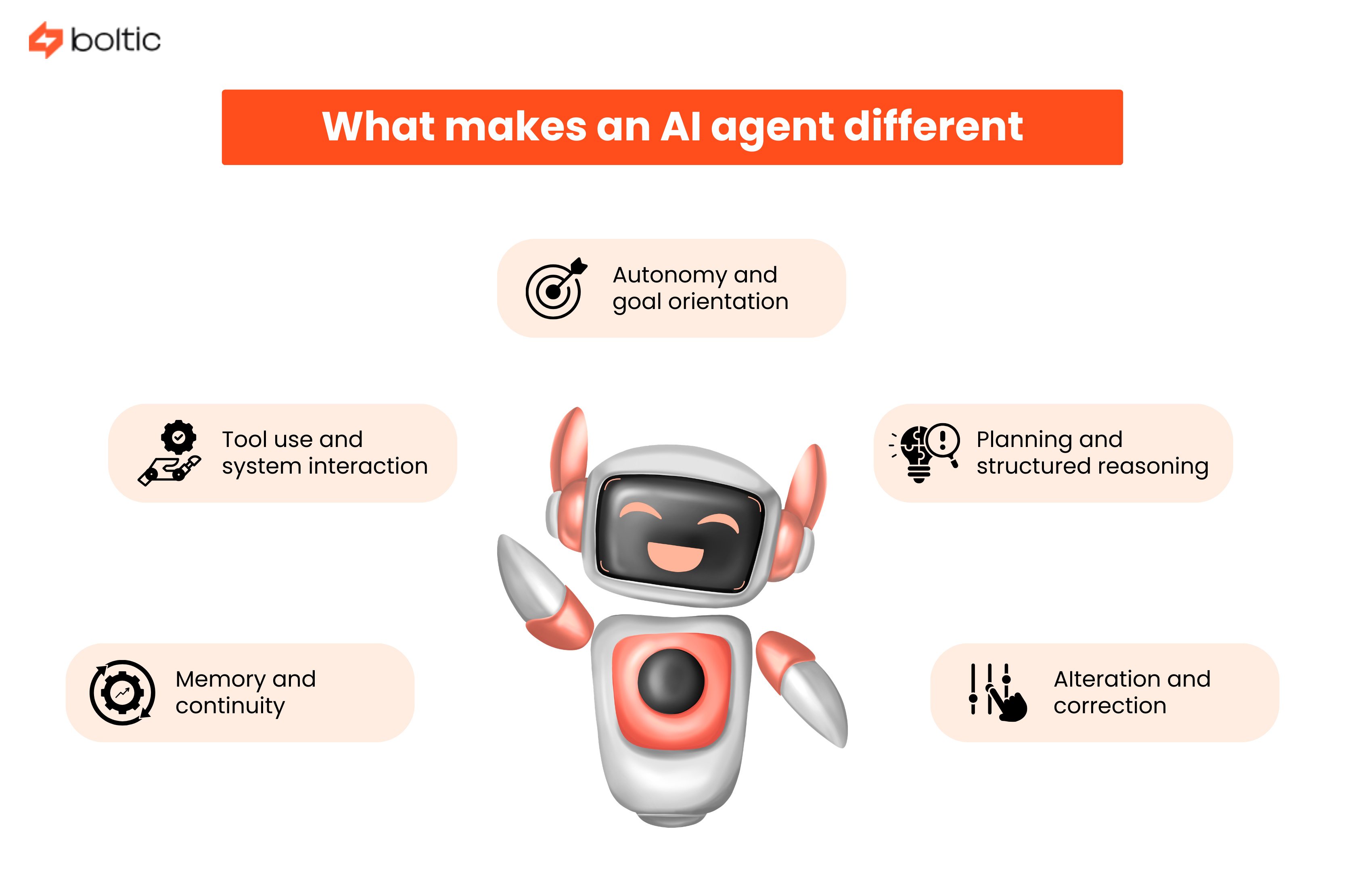

What makes an AI agent different

AI agents extend beyond language generation. They combine reasoning, memory, and action.

Autonomy and goal orientation

Agents operate around objectives. Instead of answering how to reset a password, they may detect the user’s account, trigger the reset, confirm completion, and notify relevant systems. They move from advice to execution.

Tool use and system interaction

Agents connect to external systems. They can call APIs, query databases, update CRMs, send notifications, or trigger workflows. This bridges the gap between conversation and business systems.

Planning and structured reasoning

Agents break tasks into steps. They analyze requirements, gather needed data, validate constraints, execute actions, and verify results.

This typically relies on structured reasoning techniques such as Chain-of-Thought prompting and ReAct loops.

Memory and continuity

Agents may maintain short-term memory during active tasks and long-term memory across sessions. They can remember user preferences and historical interactions. This enables continuity and personalization.

Iteration and correction

Agents observe outcomes and adjust. If a tool call fails, they retry or ask for clarification. If data is incomplete, they request missing details. This feedback loop improves reliability over time.

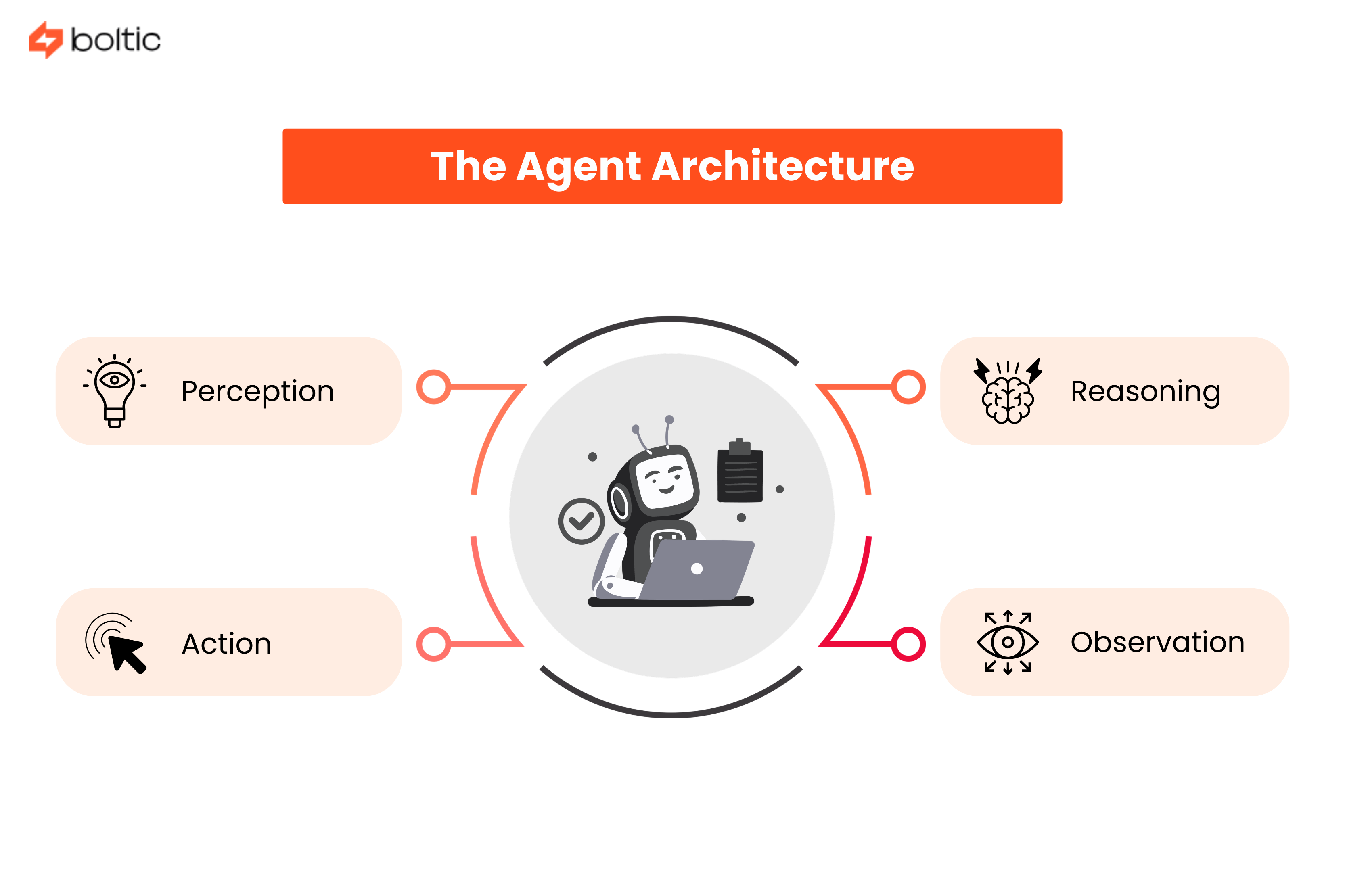

The agent architecture

Behind every agent is a structured architecture.

Perception

The agent interprets user input, contextual signals, system state, and constraints. This includes natural language understanding and mapping user goals to operational intent.

Reasoning

This layer decides what to do next. Techniques like Chain-of-Thought prompting help the model reason step by step. The ReAct pattern allows the system to alternate between reasoning and acting.

Action

The agent executes tasks using tool calls, API integrations, and system commands. Each action is structured and permission-bound.

Observation

After acting, the agent evaluates results. It checks responses, detects errors, and updates internal memory.

This perception-reason-action-observation loop continues until the goal is completed.
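The loop can be sketched as follows. This is a simplified, ReAct-style illustration. The `decide` function is a scripted stand-in for the model's reasoning, and the tool names and return values are hypothetical.

```python
from typing import Callable

ToolFn = Callable[[str], str]

def react_loop(goal: str,
               decide: Callable[[str, list[str]], tuple[str, str]],
               tools: dict[str, ToolFn],
               max_steps: int = 5) -> list[str]:
    """Alternate between reasoning (decide) and acting (tool call) until 'finish'."""
    observations: list[str] = []
    for _ in range(max_steps):
        tool_name, tool_input = decide(goal, observations)     # reasoning
        if tool_name == "finish":
            observations.append(f"final: {tool_input}")
            break
        if tool_name not in tools:
            observations.append(f"error: unknown tool {tool_name}")
            continue
        observations.append(tools[tool_name](tool_input))      # action, then observation
    return observations

# Scripted policy standing in for an LLM: pick the next step from what was observed.
def scripted_decide(goal: str, observations: list[str]) -> tuple[str, str]:
    if not observations:
        return "lookup_account", "user@example.com"
    if observations[-1].startswith("account:"):
        return "reset_password", observations[-1].split(":", 1)[1]
    return "finish", "password reset confirmed"

tools = {
    "lookup_account": lambda email: "account:42",
    "reset_password": lambda account_id: f"reset ok for {account_id}",
}

trace = react_loop("reset the user's password", scripted_decide, tools)
print(trace)
```

The `max_steps` cap is a design choice worth keeping in any real version, since it bounds cost when the goal is never reached.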

Tool Use and Function Calling

Tool use is central to agentic AI.

Agents rely on defined tools with structured schemas. Each tool includes a name, description, input format, and permission boundary. The agent selects tools based on the task context.
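One possible way to represent such a tool is shown below. The field names, the `issue_refund` handler, and the role names are assumptions for illustration; real frameworks define their own schema formats.

```python
from dataclasses import dataclass
from typing import Any, Callable

@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    input_schema: dict[str, type]     # field name -> expected Python type
    required_role: str                # permission boundary
    handler: Callable[..., Any]

def call_tool(tool: Tool, args: dict[str, Any], caller_roles: set[str]) -> Any:
    """Check permissions and inputs before the handler is allowed to run."""
    if tool.required_role not in caller_roles:
        raise PermissionError(f"{tool.name} requires role {tool.required_role!r}")
    if set(args) != set(tool.input_schema):
        raise ValueError(f"{tool.name} expects exactly {sorted(tool.input_schema)}")
    for field, expected in tool.input_schema.items():
        if not isinstance(args[field], expected):
            raise TypeError(f"{field} must be {expected.__name__}")
    return tool.handler(**args)

# Hypothetical tool definition.
def issue_refund(order_id: str, amount: float) -> str:
    return f"refunded {amount:.2f} for {order_id}"

refund_tool = Tool(
    name="issue_refund",
    description="Refund part or all of an order.",
    input_schema={"order_id": str, "amount": float},
    required_role="billing",
    handler=issue_refund,
)

receipt = call_tool(refund_tool, {"order_id": "AB-1", "amount": 25.0}, {"billing"})
print(receipt)
```

Validating before calling the handler keeps a malformed or unauthorized request from ever reaching the underlying system.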

Complex workflows often require orchestration across multiple tools. For example, handling a customer complaint might involve retrieving account data, creating a ticket, issuing a refund, and sending confirmation.

Error handling is critical. Agents must retry intelligently or escalate when needed. Security boundaries must also be enforced through role-based access and authentication layers.

Without guardrails, autonomy becomes risky.
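A retry-then-escalate policy might look like the sketch below. It assumes `TimeoutError` is the only transient failure, which is a simplification, and `EscalationRequired` is a hypothetical exception name.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

class EscalationRequired(Exception):
    """Raised when retries are exhausted and a human should take over."""

def call_with_retry(action: Callable[[], T],
                    retries: int = 3,
                    base_delay: float = 0.5) -> T:
    """Retry transient failures with exponential backoff, then escalate."""
    last_error = None
    for attempt in range(retries):
        try:
            return action()
        except TimeoutError as exc:            # treated as transient in this sketch
            last_error = exc
            time.sleep(base_delay * 2 ** attempt)
    raise EscalationRequired(f"gave up after {retries} attempts") from last_error

# Simulated tool call that times out twice before succeeding.
attempts = {"count": 0}

def flaky_create_ticket() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TimeoutError("upstream timed out")
    return "ticket created"

outcome = call_with_retry(flaky_create_ticket, retries=3, base_delay=0.0)
print(outcome)
```

Errors other than `TimeoutError` are not caught, so a bad input fails immediately instead of being retried.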

Planning and Reasoning Strategies

Planning transforms reactive systems into goal-driven ones.

- Task decomposition allows agents to split large objectives into smaller, manageable steps. This improves clarity and traceability.

- The ReAct pattern alternates between reasoning and acting. The agent thinks, acts, observes, and refines its plan.

- Tree of Thoughts expands reasoning into multiple branches before choosing the best path. This increases thoroughness but adds computational cost.

System designers must balance speed and depth. Too much reasoning increases latency. Too little increases error rates.
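The speed-versus-depth trade-off can be seen in a toy search. This is a beam-search simplification of the Tree of Thoughts idea: in the real technique a language model proposes and scores the branches, while here both are plain functions on a made-up number puzzle (reach 14 from 1 using "+3" and "*2").

```python
from typing import Callable, Optional

State = tuple[int, tuple[str, ...]]   # (current value, operations applied so far)

def tree_search(root: State,
                expand: Callable[[State], list[State]],
                score: Callable[[State], float],
                is_goal: Callable[[State], bool],
                beam_width: int = 2,
                max_depth: int = 4) -> Optional[State]:
    """Expand every state in the frontier and keep only the best `beam_width`."""
    frontier = [root]
    for _ in range(max_depth):
        candidates = [child for state in frontier for child in expand(state)]
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
        for state in frontier:
            if is_goal(state):
                return state
    return None

TARGET = 14

def expand(state: State) -> list[State]:
    value, path = state
    return [(value + 3, path + ("+3",)), (value * 2, path + ("*2",))]

def score(state: State) -> float:
    return -abs(TARGET - state[0])     # closer to the target is better

def is_goal(state: State) -> bool:
    return state[0] == TARGET

wide = tree_search((1, ()), expand, score, is_goal, beam_width=2)
narrow = tree_search((1, ()), expand, score, is_goal, beam_width=1)
print(wide, narrow)
```

With two branches kept per level the search finds a path. With one branch it commits to the locally best step and misses the goal within the depth limit. More branches mean more evaluations, which is where the extra cost comes from.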

Memory Systems

Memory separates assistants from agents.

Short-term memory maintains task context during active sessions. Long-term memory stores historical interactions and user preferences.

Many systems use vector databases to retrieve relevant past information. Retrieval ranking helps ensure that only useful context is injected into the reasoning process.
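The retrieval step can be illustrated with a toy example. Word counts stand in for learned embeddings and a Python list stands in for a vector database, so treat this as a sketch of the ranking idea only. The stored memories are invented.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query: str, memories: list[str],
             top_k: int = 2, min_score: float = 0.2) -> list[str]:
    """Rank memories by similarity and drop anything below the threshold."""
    query_vec = embed(query)
    scored = sorted(((cosine(query_vec, embed(m)), m) for m in memories), reverse=True)
    return [memory for similarity, memory in scored[:top_k] if similarity >= min_score]

MEMORIES = [
    "User prefers email notifications over SMS",
    "Last order was delayed by the carrier",
    "User asked about invoice formatting in March",
]

recalled = retrieve("How should we send notifications to this user?", MEMORIES)
print(recalled)
```

The `min_score` threshold is what keeps weakly related memories out of the prompt, even when `top_k` would allow them.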

Privacy becomes important here. Teams must define retention duration, encryption standards, consent policies, and deletion mechanisms. Responsible memory management builds trust.

Real-World Agent Examples

Customer service agents can now diagnose issues, access order data, create tickets, and fully resolve straightforward cases.

Research agents gather information from multiple sources, synthesize findings, and produce structured summaries.

Coding agents write functions, execute tests, debug issues, and suggest improvements. They accelerate development but still require oversight.

Business process agents coordinate invoices, approvals, and internal workflows across systems. This is where enterprise adoption is growing steadily.

Building Your First Agent

Start small. Then work through these steps:

- Choose a framework that matches your technical expertise and needs. LangChain works well for structured tool orchestration. AutoGPT supports experimental autonomy. Custom architectures provide full control but require deeper engineering.

- Define a clear objective. Limit the tool set. Establish measurable success criteria.

- Implement tool schemas carefully. Secure API endpoints. Test in controlled environments.

Evaluation should include accuracy testing, edge case simulation, and latency measurement. Observability tools are essential to understand agent behavior.
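A first evaluation harness can be very small. The sketch below measures accuracy and latency over a handful of cases, including one edge case. `toy_agent` and the test cases are hypothetical placeholders for a real agent and a real test set.

```python
import time
from statistics import mean
from typing import Callable

def evaluate(agent: Callable[[str], str],
             cases: list[tuple[str, str]]) -> dict[str, float]:
    """Run each case, compare with the expected answer, and record latency."""
    correct = 0
    latencies: list[float] = []
    for prompt, expected in cases:
        start = time.perf_counter()
        try:
            answer = agent(prompt)
        except Exception:
            answer = None                      # a crash counts as a wrong answer
        latencies.append(time.perf_counter() - start)
        correct += int(answer == expected)
    return {
        "accuracy": correct / len(cases),
        "mean_latency_s": mean(latencies),
        "max_latency_s": max(latencies),
    }

# Hypothetical agent: a lookup table that fails on anything unexpected.
def toy_agent(prompt: str) -> str:
    routes = {
        "refund my order": "refund_flow",
        "where is my package": "order_status_flow",
    }
    return routes[prompt.lower()]

CASES = [
    ("Refund my order", "refund_flow"),
    ("WHERE IS MY PACKAGE", "order_status_flow"),
    ("", "fallback_menu"),                     # edge case: empty input
    ("refund my order", "refund_flow"),
]

report = evaluate(toy_agent, CASES)
print(report)
```

Counting crashes as failures rather than letting them abort the run keeps one bad case from hiding the results of the rest.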

Challenges and Limitations

Agents are powerful but not flawless. They have limitations of their own:

- Reliability can vary. Multi-step reasoning introduces complexity.

- Cost increases with deeper reasoning loops. Latency becomes noticeable in real-time systems.

- Safety and governance are ongoing concerns. Agents must operate within strict boundaries.

Sometimes simpler automation is better. If a deterministic workflow solves the problem reliably, an agent may not be necessary.

The Future of Agentic AI

Multi-agent systems are emerging. One agent may plan while another executes and a third verifies results.

Specialized agents may dominate enterprise use cases due to higher reliability in narrow domains.

Human-in-the-loop systems will remain common. Approval checkpoints and escalation paths help maintain control.
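An approval checkpoint can be as simple as a threshold. In this sketch the limit of 50.0, the `run_action` function, and the reviewer callback are all assumptions; a real system would route the request to a person through a queue or ticket.

```python
from typing import Callable

def run_action(action: str, amount: float,
               approver: Callable[[str, float], bool],
               auto_limit: float = 50.0) -> str:
    """Run low-risk actions directly; send anything above the limit to a human."""
    if amount <= auto_limit:
        return f"executed: {action}"
    if approver(action, amount):               # approval checkpoint
        return f"executed after approval: {action}"
    return f"escalated: {action}"              # escalation path

# Stand-in for a human reviewer's decision.
def cautious_reviewer(action: str, amount: float) -> bool:
    return amount <= 200.0

print(run_action("refund", 20.0, cautious_reviewer))
print(run_action("refund", 120.0, cautious_reviewer))
print(run_action("refund", 500.0, cautious_reviewer))
```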

Best practices are becoming clearer. Start small. Measure outcomes. Restrict tool access. Continuously evaluate performance.

At Boltic, this practical mindset guides how agentic systems are approached. Integration and clarity matter more than novelty.

Conclusion

Agentic AI is not just better conversation. It is structured autonomy. Chatbots respond. Assistants generate. Agents act. This shift changes how businesses think about automation. Not every workflow needs an agent. But complex, multi-step processes benefit from systems that can reason, act, and adapt. Start with a defined scope. Integrate carefully. Monitor continuously. When designed thoughtfully, agentic AI becomes a dependable part of digital operations.
